# ROC CURVE IN SPSS 20 CODE

Note that the following code snippet does not use the same logic or code as fbroc and that the R code is much slower.įpr = sum(!true.class & (pred > thresholds)) / n.neg Remember, that if we have n positive samples, for any cutoff the TPR must be a multiple of 1/n. Let us first focus on the combinations of TPR and FPR that are actually achieved for the given data. Calculating TPR and FPR for all cutoffs present in the data More interestingly, the bug led me to consider some conceptual difficulties with the construction of ROC curves when ties are present. Instructions for the installation can be found here. I have already fixed this bug in the development version, so if you have ties in your predictor I recommend trying the development version instead of fbroc 0.1.0. savefig ( 'multiple_roc_curve.While working on the next version of fbroc I found a bug that caused fbroc to produce incorrect results when the numerical predictor variable includes ties, that is, if not every value is unique. Use the following code to export the figure. predict_proba ( X_test ) fpr, tpr, _ = roc_curve ( y_test, yproba ) auc = roc_auc_score ( y_test, yproba ) result_table = result_table.

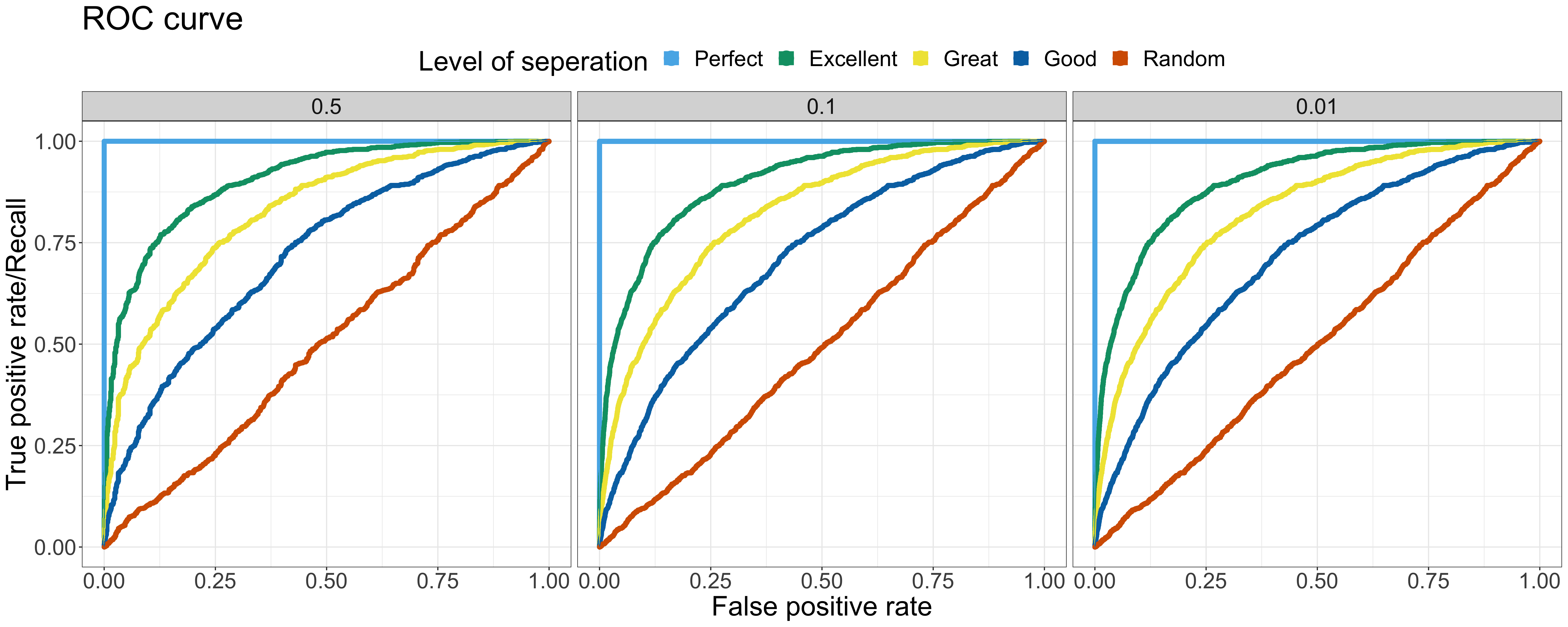

fit ( X_train, y_train ) yproba = model. DataFrame ( columns = ) # Train the models and record the resultsįor cls in classifiers : model = cls. # Import the classifiersįrom sklearn.linear_model import LogisticRegression from sklearn.naive_bayes import GaussianNB from sklearn.neighbors import KNeighborsClassifier from ee import DecisionTreeClassifier from sklearn.ensemble import RandomForestClassifier from trics import roc_curve, roc_auc_score # Instantiate the classfiers and make a listĬlassifiers = # Define a result table as a DataFrame Here, we’ll train the models on the training set and predict the probabilities on the test set.Īfter predicting the probabilities, we’ll calculate the False positive rates, True positive rate, and AUC scores. Train the models and record the results.Instantiate the classifiers and make a list.25, random_state = 1234 ) Training multiple classifiers and recording the results target X_train, X_test, y_train, y_test = train_test_split ( X, y, test_size =. filterwarnings ( 'ignore' ) Loading a toy Dataset from sklearn from sklearn import datasets from sklearn.model_selection import train_test_split data = datasets. Importing the necessary libraries import pandas as pd import numpy as np % matplotlib inline import matplotlib.pyplot as plt import seaborn as sns sns. We’ll use Pandas, Numpy, Matplotlib, Seaborn and Scikit-learn to accomplish this task. Plotting multiple ROC-Curves in a single figure makes it easier to analyze model performances and find out the best performing model. You may face such situations when you run multiple models and try to plot the ROC-Curve for each model in a single figure. The ROC curve is plotted with False Positive Rate in the x-axis against the True Positive Rate in the y-axis. Higher the AUC, better the model is at predicting 0s as 0s and 1s as 1s.

It tells how much model is capable of distinguishing between classes. ROC is a probability curve and AUC represents the degree or measure of separability. AUC-ROC curve is a performance metric for binary classification problem at different thresholds.
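To make the relationship between cutoffs, TPR, and FPR concrete, including what happens when the predictor contains ties, here is a minimal pure-Python sketch. It is not fbroc's implementation and not scikit-learn's `roc_curve`; the function name `roc_points` and the toy data are made up for illustration, and the strict `>` comparison is an assumption that mirrors the `pred > thresholds` expression in the R fragment.

```python
def roc_points(true_class, pred):
    """Return a list of (threshold, fpr, tpr), one per distinct value in pred.

    Assumption: a sample is called positive when its score is strictly
    greater than the threshold.
    """
    n_pos = sum(1 for t in true_class if t)
    n_neg = len(true_class) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one positive and one negative sample")

    points = []
    # Using the distinct values means tied scores share a single cutoff
    for thr in sorted(set(pred)):
        tp = sum(1 for t, p in zip(true_class, pred) if t and p > thr)
        fp = sum(1 for t, p in zip(true_class, pred) if not t and p > thr)
        points.append((thr, fp / n_neg, tp / n_pos))
    return points


# Hypothetical toy data: one positive and one negative sample are tied at 0.5
true_class = [True, True, False, True, False, False]
pred = [0.9, 0.5, 0.5, 0.4, 0.3, 0.1]

for thr, fpr, tpr in roc_points(true_class, pred):
    print(f"threshold={thr:.1f}  FPR={fpr:.3f}  TPR={tpr:.3f}")
```

With three positive samples, every TPR in the output is a multiple of 1/3. Lowering the cutoff from 0.5 to 0.4 admits the tied positive and negative samples together, so TPR and FPR change in the same step. That is the situation in which a curve built by moving one sample at a time can go wrong.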